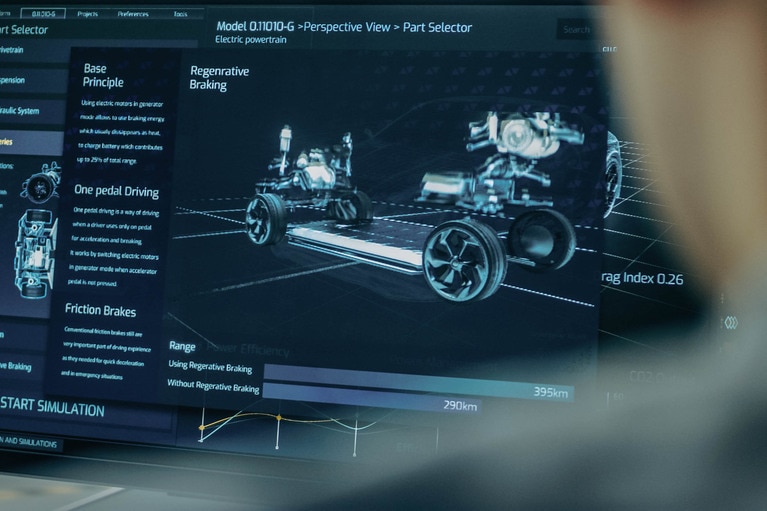

48V instead of 12V: DC‑DC converters in focus of the SDV

Future vehicles use 12V, 48V, and HV rails. Vicor experts discuss the vital role of DC-DC converters in SDVs, focusing on energy efficiency and safety

Podcast with Paul Yeaman, Director of Applications Engineering at Vicor, and Tesla engineer Milovan Kovacevic

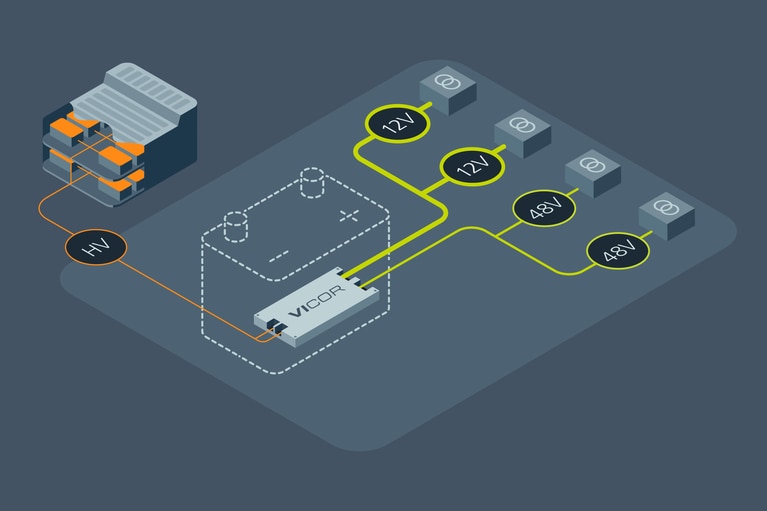

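As vehicles become more electrified, power system design is receiving unprecedented attention. An electric vehicle consumes 20 times more electrical power than a conventional internal combustion engine vehicle. That increase requires a corresponding increase in the size and weight of the power electronics, which limits the vehicle's driving range. Vicor high-power-density solutions use compact, high-density modules to shrink the power electronics substantially, making them 60-70% smaller than competing products and saving space and weight in the power delivery network.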

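In this episode of the Moore's Lobby podcast, host Dave Finch talks with Paul Yeaman, Director of Applications Engineering at Vicor, and Tesla engineer Milovan Kovacevic about power system design considerations and the challenges Tesla faces in vehicle electrification.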

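DAVE FINCH: Welcome back to The Lobby. If successful automotive power electronics design depends on power quality, thermal performance, noise characteristics, and size, it may be worth understanding the strengths and weaknesses of power modules. Paul Yeaman, Director of Applications Engineering at Vicor, walks through these compliant, high-efficiency solutions for high- and low-voltage automotive electronics.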

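When I started thinking about it, say we start with a very high-voltage battery and step down to, say, 48V, maybe 12V, and then keep converting down from there, I quickly realized: wow, every time you do that, there are losses. For an EV application, I start thinking about shorter driving range. I also start thinking about the extra weight. I noticed that your switching converters reach efficiencies of 96%, 97% and above.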

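PAUL YEAMAN: Yes. First, one of the reasons for using a higher voltage (48V instead of 12V) is to limit distribution losses, that is, the resistive losses that come from delivering high current through the vehicle chassis or any kind of system.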

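The catch with moving to a higher voltage is that you then have to step down to the voltage you actually need, so it is important that your conversion losses are smaller than the losses from distributing power at low voltage and high current. It really comes down to a choice: do you use 12V throughout the system, or do you store energy at 48V and step down to 12V? In other words, under what conditions does one approach make more sense than the other?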
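The comparison described here can be put into rough numbers. The sketch below is only an illustration of the reasoning, not a Vicor design method: the load power, harness resistance, and converter efficiency are hypothetical values, and it assumes the same wiring resistance at both bus voltages.

```python
def distribution_loss_w(power_w: float, bus_voltage_v: float, path_resistance_ohm: float) -> float:
    """Resistive (I^2 * R) loss in the wiring for a given transported power and bus voltage."""
    current_a = power_w / bus_voltage_v
    return current_a ** 2 * path_resistance_ohm


def conversion_loss_w(load_power_w: float, efficiency: float) -> float:
    """Power dissipated in a converter that delivers load_power_w at the given efficiency."""
    return load_power_w * (1.0 / efficiency - 1.0)


# Hypothetical figures, chosen only for illustration.
LOAD_W = 1200.0        # power needed at the 12V load
HARNESS_OHM = 0.010    # assumed round-trip wiring resistance
EFFICIENCY = 0.97      # assumed 48V-to-12V converter efficiency

# Option A: distribute at 12V all the way to the load.
loss_12v = distribution_loss_w(LOAD_W, 12.0, HARNESS_OHM)

# Option B: distribute at 48V and convert to 12V next to the load.
# The 48V bus must also carry the power the converter dissipates.
converter_input_w = LOAD_W / EFFICIENCY
loss_48v = (distribution_loss_w(converter_input_w, 48.0, HARNESS_OHM)
            + conversion_loss_w(LOAD_W, EFFICIENCY))

print(f"12V distribution loss:        {loss_12v:.1f} W")
print(f"48V distribution + conversion: {loss_48v:.1f} W")
```

With these assumed values the 48V option loses less overall, but the balance shifts if the wiring resistance is lower, the load is lighter, or the converter is less efficient, which is the crossover the speaker is pointing at.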

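To say a bit more about converters at 97-98% efficiency: fundamentally pushing the technology toward higher and higher efficiency is one way to tip the balance in favor of converting rather than distributing at a lower voltage. Now, in some respects, there are things in power conversion that will never change. One of them is copper. There is no more efficient material than copper.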

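Other things are changing rapidly. I think MOSFETs fall into that category. If you take a silicon MOSFET and then look at successive generations of silicon MOSFETs, you see them steadily achieving lower RDS(on) and lower gate charge, which means more agility when it comes to converting efficiently.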

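And if you look at emerging technologies such as silicon carbide, you see significant performance gains from these new wide-bandgap devices. I'm sure you have heard a lot about them, and I'm sure you have had podcast guests on to discuss the many advantages of wide-bandgap technology. So there is a lot of innovation and development on the MOSFET side.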

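Where Vicor fits in, where Vicor does its research and innovation, is at the topology level. In other words, we will take full advantage of each new and better generation of MOSFETs. But we are also looking at how to convert power from one voltage to another, or regulate it, more efficiently. We focus on the control of power topologies, such as zero-voltage switching and zero-current switching, which are control techniques that let you turn the MOSFET on and off at the points where switching losses are minimized.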

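Magnetic materials sit in the same tension as copper and MOSFETs. Some things about magnetic materials are not going to change; they are inherent to the material itself. At the same time, there is still a lot of room for innovation in materials: we can develop better magnetic materials that deliver lower losses, or lower losses at higher switching frequencies, and so on.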

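DAVE FINCH: Yes, it's interesting that you mention higher switching frequencies, because it also makes me wonder how we use our modulation methods, for example gathering some kind of feedback from the circuit to react and adjust very quickly. When you consider only the raw physical properties of the components, does that come into play, or is it negligible?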

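PAUL YEAMAN: OK, that's a great question. The thing about high switching frequency is that it actually goes back to the copper dilemma, right? Again, we are not going to make copper more efficient. The key question for fundamentally solving this is whether we can build a power converter that uses less copper to move power from input to output. Can we make the converter smaller and more efficient?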

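Because if you think about, say, the winding of an inductor or transformer, the entire length of that winding is copper. The resistance of the winding is proportional to its length, and that comes down to physics we cannot change: we cannot change the resistivity of copper. But if we can make the inductor or transformer smaller, we can make that length (the path length of the winding) shorter. That lets us build a system with lower resistive losses even though we are still using the same material to carry the power.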
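The proportionality between winding length and resistance follows from the standard relation R = ρ·L/A. As a minimal sketch, using an approximate room-temperature resistivity for copper and hypothetical winding dimensions:

```python
# Approximate resistivity of copper near 20 °C, in ohm-metres.
COPPER_RESISTIVITY_OHM_M = 1.68e-8


def winding_resistance_ohm(length_m: float, cross_section_mm2: float) -> float:
    """DC resistance of a copper conductor: R = rho * L / A."""
    area_m2 = cross_section_mm2 * 1e-6  # convert mm^2 to m^2
    return COPPER_RESISTIVITY_OHM_M * length_m / area_m2


# Hypothetical winding: same wire cross-section, half the path length.
long_winding = winding_resistance_ohm(length_m=1.0, cross_section_mm2=1.0)
short_winding = winding_resistance_ohm(length_m=0.5, cross_section_mm2=1.0)

print(f"1.0 m winding: {long_winding * 1e3:.2f} mOhm")
print(f"0.5 m winding: {short_winding * 1e3:.2f} mOhm")
```

Halving the path length halves the DC resistance, and therefore the I²R loss at a given current, without any change to the material. The sketch ignores AC effects such as skin and proximity effect, which also matter at high switching frequencies.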

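High switching frequency is the key to building a smaller converter, because at a higher switching frequency your energy storage elements, the transformers, inductors, and anything else that stores inductive energy, can shrink, and so can the capacitors. Smaller components mean less copper and lower resistive losses.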

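The problem with higher switching frequency is that the higher the frequency, the more switching loss you generate. You are turning the MOSFETs on and off faster, and if the system has significant switching losses, any gain you get from smaller components is quickly cancelled out.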
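A common first-order estimate shows why hard-switching loss grows with frequency: during each transition the voltage across the switch and the current through it overlap, dissipating roughly ½·V·I·t per cycle, and that energy is paid once per switching period. The sketch below uses this textbook approximation with hypothetical operating values; it is not a model of any particular converter.

```python
def hard_switching_loss_w(
    bus_voltage_v: float,
    load_current_a: float,
    transition_time_s: float,
    switching_freq_hz: float,
) -> float:
    """First-order overlap loss: 0.5 * V * I * (t_on + t_off) * f.

    transition_time_s is the combined turn-on plus turn-off overlap time.
    """
    energy_per_cycle_j = 0.5 * bus_voltage_v * load_current_a * transition_time_s
    return energy_per_cycle_j * switching_freq_hz


# Hypothetical operating point: 48V bus, 20A, 20ns total transition time.
for freq_hz in (100e3, 500e3, 1e6):
    loss_w = hard_switching_loss_w(48.0, 20.0, 20e-9, freq_hz)
    print(f"{freq_hz / 1e3:6.0f} kHz -> {loss_w:.2f} W")
```

Because the per-cycle energy is fixed by the device and operating point, a tenfold increase in frequency gives a tenfold increase in this loss term, which is what erodes the benefit of smaller magnetics.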

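That is why Vicor works on control techniques and topologies built around zero-voltage and zero-current switching: if you can limit or eliminate switching losses in the power converter, you resolve the conflict between switching frequency and switching loss. Then you can shrink the product and gain the efficiency benefit. It is a perfect example of the kind of trade-off you face in power electronics.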

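DAVE FINCH: Indeed. At different frequencies, do you find that noise is amplified or minimized within particular frequency ranges, or is it simply switching noise that doesn't depend on frequency?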

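PAUL YEAMAN: So for certain types of noise... Another term for zero-voltage and zero-current switching is soft switching, right? I think the physics behind it is that if you are hard switching, the way to keep switching losses as low as possible is to turn the switch on and off as fast as you can.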

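In other words, if you think of the MOSFET as a variable resistor, when the MOSFET is fully on, the resistance is very low and so your losses are very low. When the MOSFET is off, the resistance is very high and no current flows, so the loss is zero. Most of the loss occurs during the time the MOSFET is transitioning from one resistance to the other. So if you are hard switching a device and are not using zero-voltage or zero-current switching, you have to get through that region as fast as possible.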

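Any time you take something that carries charge and rapidly move voltage or current from one point to another through the inductance and parasitics in the circuit, you are effectively launching energy into other parts of the system. That is really the origin of electromagnetic interference and conducted noise.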

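By definition, if you hard switch a device, there is more noise associated with it. When you soft switch a device, first of all, if the voltage across it is zero, you don't need to change the voltage or current quickly, because at that zero-voltage point there is no voltage forcing current through that variable resistor. That may be a somewhat simplified way to look at it, but I think it's actually quite apt.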

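So fundamentally, when we talk about soft switching, we are really talking about slow switching. When you switch slowly, you don't get the parasitic voltage or current spikes that spray into other parts of the system and cause noise.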

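That is not to say that a soft-switching converter is completely noise-free. There are other sources of noise. But if you take a hard-switched converter and a zero-voltage-switching converter and compare their noise signatures, you will see a big difference between soft and hard switching.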

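DAVE FINCH: One thing you mentioned in our earlier conversation was power quality. Whether as a Vicor employee or as a power management engineer, what kinds of power quality problems are you solving?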

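PAUL YEAMAN: Yes, so I think there are a lot of different things that apply to different industries. When I mention power quality, I can actually mean a lot of different things.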

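One of them is EMI: how clean is the power? When your power converter is operating, how much noise couples into your radio, or how much interferes with the Wi-Fi signal, and so on? That is really important to how the rest of the system functions.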

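But I also mean the ability to handle voltage variation at the load. Going back to our earlier topic, where is the dividing line between stepping down from 48V to 12V and simply distributing 12V through the system? One consideration is that as the current distributed through the system rises and power quality falls, being able to provide a voltage that is immune to disturbances has a lot of advantages. One focus of power quality is being able to build a system that provides a very stiff voltage source, one that can handle any kind of slew rate the load presents.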

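For example, stepping away from automotive for a moment and looking at computing, a microprocessor needs a voltage source that can handle current changes of tens of thousands of amps within a few microseconds. That kind of slew rate requires very low impedance at very high frequency. The difference between a 70mV and a 75mV voltage deviation on a load step can be the difference between a processor that works properly and a blue screen of death. In that situation power quality is critical: you need a source that can power not just the load but any transient the load may see.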
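One way to see why a few millivolts matter is the target-impedance rule of thumb: the voltage deviation on a load step is roughly the current step multiplied by the impedance of the power delivery network at the relevant frequency. The sketch below applies that rule with a hypothetical 100A load step; the step size is an assumption for illustration, not a figure from the conversation.

```python
def target_impedance_ohm(allowed_deviation_v: float, load_step_a: float) -> float:
    """Maximum network impedance that keeps the deviation within budget: Z = dV / dI."""
    return allowed_deviation_v / load_step_a


def voltage_deviation_v(load_step_a: float, network_impedance_ohm: float) -> float:
    """Approximate deviation caused by a load step: dV = dI * Z."""
    return load_step_a * network_impedance_ohm


LOAD_STEP_A = 100.0  # hypothetical load step

z_for_70mv = target_impedance_ohm(0.070, LOAD_STEP_A)
z_for_75mv = target_impedance_ohm(0.075, LOAD_STEP_A)

print(f"Impedance for a 70mV budget: {z_for_70mv * 1e3:.2f} mOhm")
print(f"Impedance for a 75mV budget: {z_for_75mv * 1e3:.2f} mOhm")
print(f"Margin between the two:      {(z_for_75mv - z_for_70mv) * 1e6:.0f} uOhm")
```

Under this assumption the two budgets differ by only tens of micro-ohms of network impedance, which gives a sense of how tight the requirement on the source is.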

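DAVE FINCH: That leads to my next question, which is that thermal management has to cope with dynamic loads like that. The modules you make are another great example where you think: man, how do they pack that much performance into such a compact, lightweight product without the thermal problems that plague so many systems?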

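PAUL YEAMAN: OK, that's a great question. I think there are probably two factors. One comes down to size. The smaller you build a device, the closer you can put the heat source to the surface that conducts the heat out of the device. Consider a power supply in a big box, with the MOSFETs buried inside that box on a printed circuit board. You need to attach a heat sink to them, and then you need a fan to move air across the heat sink to carry the heat away from the MOSFETs. If you look at how Vicor minimizes the size of its power components, you will find that we now have 1 mm of separation between the MOSFETs that process the power and the surface of our package or component.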

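The second is, I guess you would say, motherhood and apple pie: if you build a more efficient power converter, you have less heat to remove in the first place. That is the other, more direct way to deal with the thermal problem: reduce the losses to begin with.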
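Both factors can be combined in a simple steady-state estimate: the temperature rise of the heat source above the cooling surface is the dissipated power multiplied by the thermal resistance of the path between them. The sketch below uses hypothetical output power, efficiency, and thermal resistance values to show how each lever helps; it is not based on any Vicor datasheet.

```python
def temperature_rise_c(
    output_power_w: float,
    efficiency: float,
    thermal_resistance_c_per_w: float,
) -> float:
    """Steady-state rise: dT = P_loss * R_theta, with P_loss = P_out * (1/eta - 1)."""
    loss_w = output_power_w * (1.0 / efficiency - 1.0)
    return loss_w * thermal_resistance_c_per_w


# Hypothetical 1kW converter; all values are illustrative assumptions.
baseline = temperature_rise_c(1000.0, 0.96, 1.0)
more_efficient = temperature_rise_c(1000.0, 0.98, 1.0)        # lever 1: less loss
shorter_path = temperature_rise_c(1000.0, 0.98, 0.5)          # lever 2: lower R_theta too

print(f"96% efficient, 1.0 C/W: {baseline:.1f} C rise")
print(f"98% efficient, 1.0 C/W: {more_efficient:.1f} C rise")
print(f"98% efficient, 0.5 C/W: {shorter_path:.1f} C rise")
```

Raising efficiency from 96% to 98% roughly halves the heat to be removed, and shortening the thermal path reduces the rise again for the same loss.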

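DAVE FINCH: Right. You know, what strikes me is that the real power of a solution like a module is that it seems you no longer need a lifetime of expertise to get very clean power that is highly immune to disturbances and highly responsive.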

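The second point, though, is that the solution has to carry certain certifications. That looks like an advantage too. If you are a design engineer, you can buy a module that has a lifetime of design expertise built into it and is already certified. (Laughter) So right from the start it looks like a very attractive proposition.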

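PAUL YEAMAN: Yes. I mean, the way I like to look at it, or the way I like to think about it, is that with our customers' engineers, we are not trying to take their jobs. What we are trying to do is solve, and solve very effectively, the power challenges they would otherwise have to solve themselves every time. Customers don't have to reinvent the wheel; they can focus on the challenges unique to their systems rather than worrying about power conversion, a challenge that can be solved globally and really applies across all kinds of systems.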

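You are absolutely right that an automotive power supply has to meet different standards than a microprocessor power supply or an industrial robot power supply. Those standards will differ and are specific to each industry. But at some level all three industries need voltage, current, and a certain level of power, power quality, cleanliness, and immunity, which is what lets us solve those global challenges across the board so that customers can concentrate on the challenges that are specific to their systems and industries.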

DAVE FINCH: Once again, sincere thanks to our guests Milovan Kovacevic and Paul Yeaman for joining from your home offices this week and teaching me more about power electronics.

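Paul Yeaman works extensively with technology leaders across the industry to develop and implement leading power solutions that meet the industry's most demanding power requirements. Through regular exposure to the power challenges that new technologies bring, Paul understands the broad trends in the power industry and is committed to ensuring that innovators can integrate power solutions that meet those needs. Paul has more than 20 years of experience in design and applications engineering in the power electronics industry.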

Paul Yeaman, Senior Director, Applications Engineering

This podcast was originally published by All About Circuits.
